Chapter 1

Introduction

SW is a many-faced entity, a colossal structure of standards and resources. It is also an idea shared by a multitude of communities, a concept of structured information, and an abstraction of knowledge. It is a mixture of technologies, created over a decade of work by professionals. Academia researches it, businesses try to create common ground with it, and visionaries preach of its promises; A richer world, where computer-driven agents find, process, and act upon information tailored for our need.

At the center of the SW we have the W3C, led by Tim Berners-Lee. Berners-Lee is perhaps more famous for his invention, the WWW, and he is also the one who coined the phrase SW. It is in his writings of Design Issues we find the essence of SW, namely the sentence "The Semantic Web is a web of data, in some ways like a global database" [5].

The web of data has been in the making since the late 1990s, but in terms of traction there is still much to be done. Some complain it is still very much an academic affair, while others complain of the lack of interest from the developing community.

This master thesis has taken the approach to look at the gap between SW and the developing community by trying to construct a framework that offers tools to access SW. It has been written in and for JS, as it is a programming language of the web, and the timing seems right.

JS can relate to SWs struggles for traction. For long time it was ridiculed by developers, saying it was a silly language that merely created fancy effects on web pages, but not doing anything useful. Douglas Crockford, an evangelist of JS, has called JS the world's most misunderstood language [15]. And if the name and its syntax was not confusing enough, the browsers with their differing implementations were not making it any easier.

There were, and still are, many reasons to why people get confused by JS. But in the mid-2000s, efforts were made to make JS more accessible to developers. Prototype, MooTools, and jQuery are all frameworks that promises APIs for easier, cross-browser access to the power within JS. And it worked! Readily manipulation of the DOM, asynchronous fetching of resources with AJAX, and the increasing efforts of making JS into a full-fledged server-side programming language, are making JS a powerful and fun tool for developers to work with.

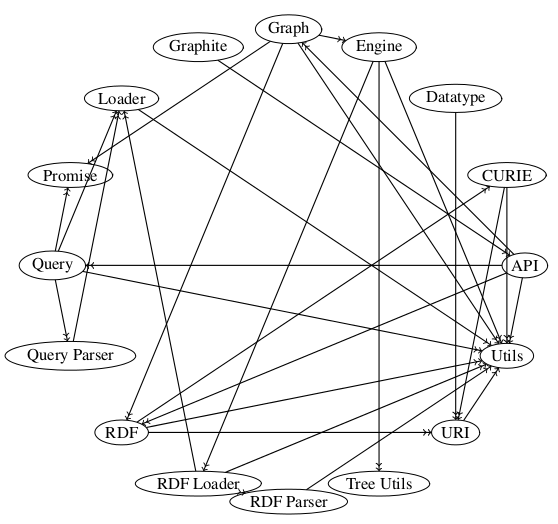

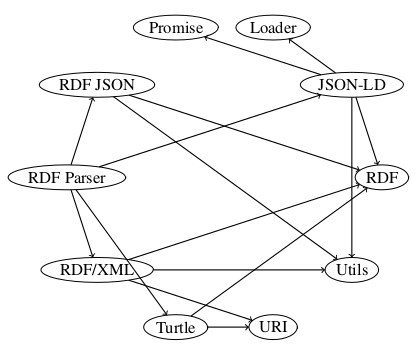

It is this fertile ground the work of this master thesis is trying to tap into. This work presents Graphite, which is the authors main contribution. It is an AMD-based framework (described in section 2.2.8.3) written in JS that sports a modularized API to fetch resources in the SW, process it and output in useful way for JS-developers. Frameworks typically serve to implement (larger-scale) components, and are implemented using (smaller-scale) classes [31]. This description of frameworks suits my implementation well, as the work in large part will consist of defining smaller components and have them collaborate effectively for a higher-level purpose.

This master thesis will describe the work and choices made during the implementation of Graphite. It is divided into three parts. The first consists of the underlying theory and constraints in technology (chapter 2), and how this fits into the scope of this thesis (chapter 3). The second part describes the implementation, and starts by explaining which tools and third party libraries I made use of (chapter 4 and 5 respectively). It continues with an extensive presentation of the framework itself (chapter 6) and a demo I constructed to demonstrate some of the frameworks' capabilities (chapter 7). Finally, in the third part I offer a discussion of the work (chapter 8), and a conclusion of the matter (chapter 9).

I hope to contribute to the developing community of SW and JS in two ways; through the thesis, to showcase what is already available and present some research and thoughts of my own, and through the framework, in the hopes that it contributes to the evolution of handling SW in JS.

Part 1

Foundations

Chapter 2

Background

This chapter will describe the technologies, standards, and theories that Graphite has been build upon.

2.1 SW

SW represents a multitude of standards and technologies, and seeing the whole picture may not be so easy to grasp. A perhaps fitting metaphor is the story of the elephant and the blind men. It is a story made famous by the poet John Godfrey Saxe, and tells the story of how six men tried to describe an elephant. Depending on which part they touched, each described the elephant differently. One approached its side, and called it a wall. Another touched the tusk, and surely it had to be a spear. The third took hold of the trunk, and spoke of how it resembled a snake. The fourth reached out for its knee, and stated it had to be like a tree. The fifth touched the ear, and meant it had to be like a fan. Finally, the last one had grabbed its tail, and stated how it had to be like a rope [34].

In comparison, here are some of the descriptions we have of SW:

- A web of data [5].

- An extension of WWW [25].

- A killer app [9].

- W3C's vision of the Web of linked data [50].

The list above are some of the descriptions in literature, and they are all true. Other aspects of SW is the set of standards it sports (e.g. RDF, RDFS, OWL, and SPARQL), technological foundations (e.g. LD), applicabilities (e.g. use of LOD amongst governments), social consequences (democratizing data), limitations (e.g. AAA), and more.

2.1.1 RDF

At the heart of SW lies RDF. It is a formalized data model that asserts information with statements that together naturally form a directed graph. Each statement consists of one subject, one predicate, and one object, and are hence often called a triple. The three elements have meanings that are analogous to their meaning in normal English grammar[24,p. 68-69], i.e. the subject in a statement is the entity which that statement states something about.

As an example of statements, take the following:

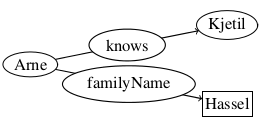

- Arne knows Kjetil.

- Arne has last name Hassel.

These statements are represented as a graph in figure 2.1. It illustrates that the subject "Arne" is related to the object "Kjetil" by the predicate "knows", and to the object "Hassel" by the predicate "familyName".

You might have noticed that the two objects have different shapes, one being a circle (like the subject), and the other being a rectangle. That is to show that "Hassel" is a literal. Literals are concrete data values, like numbers and strings, and cannot be the subjects of statements, only the objects[24,p. 69].

The circles on the other hand, are known as resources, and can represent anything that can be named. As RDF is optimized for distribution of data on WWW, the resources are represented with IRIs (IRI is an extension of URI, and is explained in section 2.1.4.2).

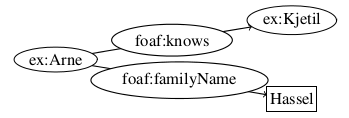

IRIs are usually declared into namespaces, to make terms more human-readable (e.g. resources in the namespace http://example.org/ could be prefixed ex). If we look at figure 2.1, we have two resources, namely Arne and Kjetil. To make these available as LD, we could assign them into the namespace ex, writing them respectively as ex:Arne and ex:Kjetil.

The basic syntax in RDF has a relatively minimal set of terms. It enables typing, reification, various types of containers (bags, sequences, and alternatives), and assigning of language or data type to a literal [2]. Its power lies in its extensibility by URI-based vocabularies [26]. By sharing vocabularies as standards between software applications, you can easier exchange data.

With this in mind, we see that figure 2.1 is faulty, and we turn to figure 2.2 to see a correct representation (using the vocabulary FOAF, prefixed foaf, for the properties).

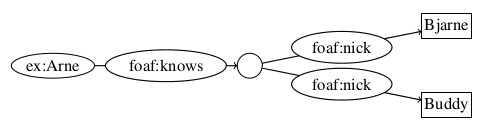

Not all resources are given IRIs though. The exception to the rule are BNs, which represent resources that have no separate form of identification [26], either because they cannot be named, or it is neither possible nor necessary at the time of modeling. These resources are not designed to link data, but to model relations of resources that are given IRIs.

An example of modeling BN is given in figure 2.3, where I have modeled that ex:Arne has a friend, who we do not know anything about except his nicknames, Bjarne and Buddy.

The figures 2.2 and 2.3 are examples of the form of visualization we will have of graphs in RDF.

2.1.2 RDFS

RDFS is an extension in form of vocabulary that extends the semantic expressiveness of RDF. But RDFS is not a vocabulary in the traditional sense that it covers any topic-specific domain [25,p. 46]. It is designed to extend the semantic capabilities of RDF, and in that sense it can be regarded as a meta-vocabulary.

The perhaps most important feature of RDFS is its ability to support taxonomies. It empowers the use of rdf:type by introducing rdfs:Class, in effect enabling classification. The properties rdfs:range, rdfs:domain, rdfs:subClassOf, and rdfs:subPropertyOf further extends this feature.

It also builds on the reification-properties of RDF, by instantiating rdf:Statement as a rdfs:Class. It continues by clarifying the semantics of rdf:subject, rdf:predicate, and rdf:object by instantiating them as rdf:Property, and in terms of entailment (explained in section 2.1.7) ties together with rdfs:range and rdfs:domain.

Another extension is the clarification of containers by introducing the class rdfs:Container and the property rdfs:containerMembershipProperty, which is an rdfs:subPropertyOf of the rdfs:member [13].

Finally, it introduces the utility properties rdfs:seeAlso and rdfs:isDefinedBy. The former represents resources that might provide additional information about the subject resource, while the latter gives the resource which defines a given subject. It also clarifies the use of rdf:value, to encourage its use in common idioms [13].

2.1.3 OWL

In the same way RDFS is an extension to RDF in order to express richer semantics, OWL is an extension to RDFS to express even richer semantics. It does so by introducing vocabularies that are based on formal logic, and aims to describe relations between classes (e.g. disjointness), cardinality (e.g. "exactly one"), equality, richer type of properties, characteristics of properties (e.g. symmetry), and enumerated classes [44,sec. 1.2].

As of this writing, OWL exists in two versions: The version recommended by W3C in 2004 (often known as OWL 1), and OWL 2, which became recommended in 2009. OWL 2 is an extension and revision of OWL 1, and is backward compatible for all intents and purposes [46].

OWL 1 features three sublanguages/profiles1. These are, with complexity in increasing order (all quoted from OWL Features [44]):

- OWL Lite: Supports classification hierarchy and simple constraints (e.g. only cardinality values of 0 and 1).

- OWL DL: Maximum expressiveness while retaining computational completeness and decidability.

- OWL Full: Maximum expressiveness and the full syntactic freedom of RDF, but with no computational guarantees.

OWL 2 also make a distinction with DL and Full. It does not list a Lite profile, but all OWL Lite ontologies are OWL 2 ontologies, so OWL Lite can be viewed as a profile of OWL 2 [47]. In addition, DL has three sublanguages that are not disjunct, and also does not cover the complete OWL 2 DL. These sublanguages are (all quoted from OWL 2 Profiles[47]):

- OWL EL: Designed to be used with ontologies that contain very large numbers of either properties or classes.

- OWL QL: Aimed at applications that use very large volumes of instance data, and where query answering is the most important reasoning task.

- OWL RL: Aimed at applications that require scalable reasoning without sacrificing too much expressive power.

To go through all differences between OWL 1 and OWL 2 would be beyond the scope of this thesis, but suffice to say is that OWL 2 is designed to be backward compatible with OWL 1, and the sublanguages OWL provides as a whole increases the reasoning capabilities of SW.

2.1.4 LD

A cornerstone of RDF is that all identifications (that is, except BNs) are IRIs. In this way, machines can browse the web for relevant resources, much like you browse the web through hyperlinks. This design feature makes RDF adhere to LD, which is a term that refers to a set of best practices for publishing and connecting structured data on the web [12].

Tim Berners-Lee have in his article about LD2 outlined four "rules" for publishing data on WWW [7]:

- Use URIs as names for things.

- Use HTTP URIs so that people can look up those names.

- When someone looks up a URI, provide useful information, using the standards (RDF, SPARQL).

- Include links to other URIs, so that they can discover more things.

These have become known as the "Linked Data principles", and provide a basic recipe for publishing and connecting data using the infrastructure of the WWW while adhering to its architecture and standards [12].

LD are reliant on two web-technologies, namely IRIs and HTTP. Using the two of them you can fetch any resource addressed by an IRI that uses the HTTP-scheme. When combining this with RDF, LD builds on the general architecture of the Web [43].

The Web of Data can therefore be seen as an additional layer that is tightly interwoven with the classic document Web and has many of the same properties [12]:

- The Web of Data is generic and can contain any type of data.

- Anyone can publish data to the Web of Data.

- Data publishers are not constrained in choice of vocabularies with which to represent data.

- Entities are connected by RDF links, creating a global data graph that spans data sources and enables the discovery of new data sources.

2.1.4.1 LOD

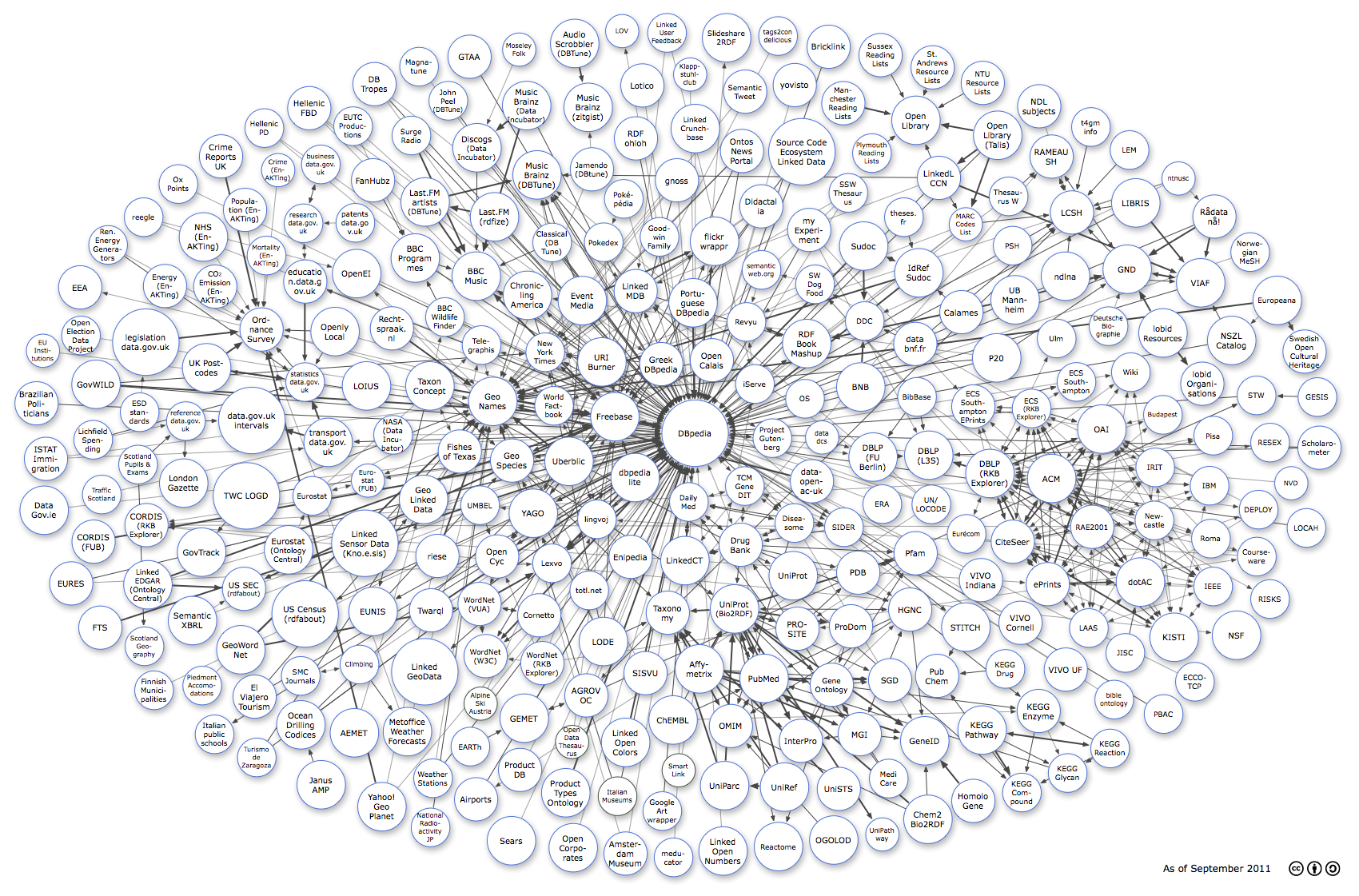

Based on the notion of LD, there is a movement to publish data on WWW as LOD. Especially toward governmental institutions there is now an increasing trend of opening data3.

To encourage this trend, Tim Berners-Lee published a star rating system. On a scale from one to five stars, it rates how well the given dataset is in becoming open. It is incremental, meaning that the dataset needs to be have one star before it can be given two. One star is given if your data is available on WWW with an open license. Two stars means that your data is available in machine-readable structure, and is valid for another star if the structure is a non-proprietary format (e.g. CSV instead of Excel). Four stars are given if your the data is identified by using open standards from W3C (e.g. RDF and SPARQL). The last star means that your data also link to other people's data, in order to provide context [7].

Figure 2.4 shows the linking open data cloud diagram. It illustrates to some extent the magnitude of data that are linked as of yet4.

2.1.4.2 URL vs. URI vs. IRI

Throughout this thesis you will read the terms URLs, URIs, and IRIs being used interchangeably. I strive to use IRI as it is the term fronted in the newest specs by W3C, but in some cases it is more appropriate to use the others because of the texts they reference.

URLs and URIs are the most commonly used terms. The former denotes dereferenceable resources on WWW, while the latter is a generalization that can denote anything that can be identified, even resources not on WWW. But URIs are limited to the character-encoding scheme ASCII, and as such IRI has been introduced to solve this problem5.

URI have the form scheme:[//authority]path[?query][#fragment], where the parts in brackets are optional. The list below explains the different terms (shortened versions of the ones offered by Hitzler et.al. [25,p. 23]. The explanations are equally valid for URL and IRI.

- scheme: The scheme classify the type of URI, and may also provide additional information on how to handle URIs in applications.

- authority: An authority is the provider of content, and may provide user and port details (e.g. arne@semanticweb.com, semanticweb.com:80).

- path: The path is the main part of many URIs, though it is possible to use empty paths, e.g., in email addresses. Paths can be organized hierarchically using / as separator.

- query: The query can be recognized with the preceding ?, and are typically used for providing parameters.

- fragment: Fragments provide an additional level of identifying resources, and are recognized by the preceding #.

2.1.5 Serializations

RDF in itself offers no serialization of the graph it represents. But there are many serializations available, and more are coming as of this writing.

There are some considerations to take when choosing a serialization for a given project. One consideration is the ease for humans to read the syntax, which is very useful if you want to verify how your data is related. Another is the availability of tools to process the serialization. RDF/XML, for example, is based on XML, and as such there are many tools that can deserialize it. Turtle on the other hand is specific for RDF, and may not be as easy to deserialize. But most will agree that the latter is much easier to read and understand than the former.

2.1.5.1 RDF/XML

RDF/XML has been recommended by W3C to represent RDF since the beginning of SW [26,sec. 2.2.4]. As the name suggests, RDF/XML is based on the markup language XML. XML may not be as humanly accessible as some of the other serializations, but it is the most commonly used, probably because of the readily available software to process XML-documents.

XML is tree-based, which means some considerations need to be taken when we serialize graphs. Each statement will have the subject as the root, followed by the predicate, and then the object. As an example of this we have listing 2.5, which shows a serialization of figure 2.2.

<?xml version="1.0" encoding="utf-8"?>

<rdf:RDF xmlns:rdf ="http://www.w3.org/1999/02/22-rdf-syntax-ns#"

xmlns:foaf="http://xmlns.com/foaf/0.1/">

<rdf:Description rdf:about="http://example.org/Arne">

<foaf:knows>

<rdf:Description rdf:about="http://example.org/Kjetil">

</rdf:Description>

</foaf:knows>

<foaf:familyName>Hassel</foaf:familyName>

</rdf:Description>

</rdf:RDF>

Another reason for XML being chosen as the default serialization was that it was readily available at the time RDF was being standardized. Figure 2.6 shows a timeline of the development of XML and SW.

Listing 2.5 shows that we have namespaces in XML through the attribute rdf:xmlns. But we cannot use namespaces in values given to attributes (i.e. we have to write rdf:about="http://example.org/Arne" instead of rdf:about="Arne"). This adds to the notion that XML-documents are bigger than what we need to serialize RDF.

2.1.5.2 Turtle

Turtle defines a textual syntax for RDF that allows RDF graphs to be completely written in compact and natural text form [3]. The latest version was submitted as a W3C Team Submission 28th of March 2011. Listing 2.7 shows the serialized form of figure 2.2.

@prefix ex: <http://example.org/> .

@prefix foaf: <http://xmlns.com/foaf/0.1/> .

ex:Arne foaf:knows ex:Kjetil ;

foaf:familyName "Hassel" .

We see from the example that IRIs are written with angular brackets, literals with quotation marks, and statements ends with either a semicolon or a period. The usage of semicolon is a syntactic sugar, and enables writing the following triples without their subject, as they reuse the subject in the first statement. We can also reuse the subject and the predicate in a statement by using the comma, in essence writing a list.

The syntax @prefix is also used in the listing. This allows us to introduce namespaces, and abbreviate IRIs by prefixing them (e.g. <"http://example.org/Arne"> → ex:Arne). We also have the term @base, which also enables us to abbreviate IRIs, by writing the suffix in angular brackets (e.g. @base <http://example.org/> → <Arne>).

Turtle also supports BNs by wrapping the statements in square brackets. Listing 2.8 shows all of these syntaxes in use by serializing figure 2.3.

@base <http://example.org/> .

@prefix foaf: <http://xmlns.com/foaf/0.1/> .

<Arne> foaf:knows [

foaf:nick "Bjarne" , "Buddy" .

]

There is also syntactic sugar for writing collections. This is done by enveloping the resources as a comma-separated list in parentheses. Lastly, Turtle abbreviates common data types, e.g. the number forty two can be written 42, instead of "42"<http://www.w3.org/2001/XMLSchema\#integer>, and the boolean true can be written true instead of "true"<http://www.w3.org/2001/XMLSchema\#boolean>.

Turtle has become popular amongst the academic circles of SW, as it is a valuable educational tool because of its simplicity and readability.

2.1.5.3 N3

N3 is often presented as a compact and readable alternative to RDF/XML [8], but the syntax supports greater flexibility than the confinements of RDF (e.g. support for calculated entailment with "built-in" functions [6]).

It dates back to 1998 [25,p. 25], and currently holds status as a Team Submission at W3C, last updated 28th of March 2011. Figure 2.2 is serialized as N3 in listing 2.9.

@prefix ex: <http://example.org/> .

@prefix foaf: <http://xmlns.com/foaf/0.1/> .

ex:Arne foaf:knows ex:Kjetil ;

foaf:familyName "Hassel" .

N3 shares a lot of the syntax of Turtle, but is an extension in the regard that it has extra syntax (e.g. @keywords, @forAll, @forSome) [3,sec. 9].

2.1.5.4 N-Triples

N-Triples was designed to be a fixed subset of N3 [45,sec. 3]. It is also a subset of Turtle, in that Turtle adds syntax to N-Triples [3,sec. 8]. Serialization of figure 2.2 is given in listing 2.10.

<http://example.org/Arne> <http://xmlns.com/foaf/0.1/knows> <http://example.org/Kjetil> . <http://example.org/Arne> <http://xmlns.com/foaf/0.1/familyName> "Hassel" .

One way of looking at N-Triples is to see it as Turtle without the syntactic sugar.

2.1.5.5 RDF JSON

RDF JSON was one of the earliest attempts to make a serialization of RDF in JSON. It is designed as part of the Talis Platform6, and is a simple serialization of RDF into JSON. Figure 2.2 is serialized into RDF JSON in listing 2.11.

{

"http://example.org/Arne": {

"http://xmlns.com/foaf/0.1/knows": [ {

"value": "http://example.org/Kjetil",

"type": "uri"

} ],

"http://xmlns.com/foaf/0.1/familyName": [ {

"value": "Hassel",

"type": "literal"

} ]

}

}

RDF JSON uses the syntax provided by JSON (explained in section 2.2.4). All triples have the form { "S": { "P": [ O ] } }, where "S" is the subject, "P" is the predicate, and O is a JSON object with the following keys:

- type, required: either üri", "literal" or "bnode".

- value, required: the lexical value of the object.

- lang, optional: the language of the literal.

- datatype, optional: the data type of the literal.

2.1.5.6 JSON-LD

JSON-LD is another JSON based serialization of RDF, and is the newest serialization to be included by W3C. It became a working draft on 12th of July 20127, after being in the works for about a year by the JSON-LD CG8. It has been included in the work of the RDF WG in hope that it will become a W3C Recommendation that will be useful to the broader developer community9.

JSON-LD CG has from the start worked with the concern that RDF may be to complex for the JSON-community10, and as such has embraced LD rather than RDF. That being said, it is a goal that JSON-LD will serialize a RDF graph, if that is what the developer want to do. This is reflected in the current working draft, in that subjects, predicates and objects "SHOULD be labeled with an IRI". This does introduce the problem that valid JSON-LD documents may not be valid RDF serializations.

Another design goal of JSON-LD is simplicity, meaning that developers only need to know JSON and two keywords (i.e. @context and @id) to use the basic functionality of JSON-LD[49,sec. 2]. So how do we use these keywords? Lets look at two examples in listings 2.12 and 2.13, which serialize figures 2.2 and 2.3 respectively.

{

"@context": {

"ex": "http://example.org/",

"foaf": "http://xmlns.com/foaf/0.1/"

},

"@id": "ex:Arne",

"foaf:knows": "ex:Kjetil",

"foaf:familyName": "Hassel"

}

{

"@context": {

"ex": "http://example.org/",

"foaf": "http://xmlns.com/foaf/0.1/"

},

"@id": "ex:Arne",

"foaf:knows": {

"foaf:nick": [ "Bjarne", "Buddy" ]

}

}

In listing 2.12 we see that prefixing namespaces are featured in line 3 and 4. We also see that the subject are defined by using the proprety @id. The absence of @id creates a blank node, as shown in listing 2.13.

Another design goal of JSON-LD is to provide a mechanism that allow developers to specify context in a way that is out-of-band. The rationale behind this is to allow organizations that already have deployed large JSON-based infrastructure to add meaning to their JSON documents that is not disruptive to their day-to-day operations[49]. In practice this will work by having two JSON documents, one being the original JSON document, which is not linked, and another that provide rules as to how terms should be transformed into IRIs. Listing 2.14 shows how a serialization of figure 2.1 could be transformed into the serialization of figure 2.2.

// A non-LD JSON object

{

"Arne": {

"knows": "Kjetil",

"lastname": "Hassel"

}

}

// A JSON-LD object designed to transform the object above into a JSON-LD compliant object

{

"@context": {

"ex": "http://example.org/",

"foaf": "http://xmlns.com/foaf/0.1/",

"Arne": {

"@id": "ex:Arne"

},

"Kjetil": {

"@id": "ex:Kjetil"

},

"knows": "foaf:knows",

"lastname": "foaf:familyName"

}

}

2.1.5.7 RDFa

RDFa is another serialization that recently got promoted in the W3C-system. As of 7th of June 2012 it is a W3C Recommendation, and offers a range of documents (the RDFa Primer11, RDFa Core12, RDFa Lite13, XHTML+RDFa 1.114, and HTML5+RDFa 1.115).

RDFa makes it possible to embed metadata in markup languages (e.g. HTML), so as to make it easier for computers to extract important information. This is in response to the fact that some semantics may not be specific enough. Take the title-tags in HTML, H1-H6. Good practices suggest only using H1 one time, so that it only specifies the most important title for the page. But even so, what does the H1-tag specify title for? Is it the page as a whole, or is it the specific article on that page. With RDFa you can specify this.

The reasoning is that by making use of independently created vocabularies, the quality of metadata will increase. And by tying it into RDF, you can increase the overall knowledge of WWW.

RDFa has a syntax much to big to describe in detail here, but lets look at an example, by serializing figure 2.2 into a fracture of HTML, given in listing 2.15.

<div

vocab="http://example.org/"

prefix="foaf: http://xmlns.com/foaf/0.1/"

about="Arne">Arne knows

<span

property="foaf:knows"

resource="Kjetil">Kjetil</span>

and has last name <span

property="foaf:familyName">

Hassel</span>.</div>

Listing 2.15 shows us the use of the attributes vocab, prefix, about, property, and resource:

- vocab defines the usage of a single vocabulary for the nested terms.

- prefix allows us to introduce prefixes in case we want to mix in more vocabularies.

- about defines the subject in a triple.

- property defines the predicate in a triple.

- resource may define the object and the subject, depending on context.

2.1.6 Querying

An important feature of structured data is the possibility of querying it. You could have the users scour model in tools like a SW or RDF browser, but this can be a tedious task, and very inefficient for a machine. To query RDF we need a query language that recognizes RDF as the fundamental syntax [24,p. 192] (or rather, as the fundamental model).

2.1.6.1 SPARQL

SPARQL is the answer to the need for a query language. It exists as version 1.0, which became a W3C Recommendation 15th of January 2008, and as version 1.1, which is a working draft, last updated 5th of January 2012. Version 1.1 builds upon version 1.0, and sports features such as (all fetched from the document SPARQL 1.1 Query Language [48]):

- The query forms SELECT, ASK, CONSTRUCT, and DESCRIBE,

- Grouping, ordering, and limitation of results fetched,

- Several shortened query forms,

- Aggregation,

- Subqueries,

- Negation,

- Expressions in the SELECT clause and Property Paths,

- Assignment, and

- A large list of functions and operators.

As the most powerful version, I will use version 1.1 as the basis for this thesis, and it will be the version I refer to when referring to SPARQL.

There are four fundamental forms of read-queries in SPARQL, namely SELECT, ASK, CONSTRUCT, and DESCRIBE. The two latter returns new graphs, that can be used as basis for additional queries and manipulations (e.g. merging with other graphs).

The SELECT form enables us to query for variables, and return them in tabular form. We can project a specific list of variables we want returned, or just select all variables by using the asterisk sign.

Listing shows a very simple example of a SELECT query. If we use that query against the model in figure 2.2, we will get the table 2.1 as a result.

SELECT *

WHERE { ?subject ?predicate ?object }

| ?subject | ?predicate | ?object |

|---|---|---|

| http://example.org/Arne | http://xmlns.com/foaf/0.1/knows | http://example.org/Kjetil |

| http://example.org/Arne | http://xmlns.com/foaf/0.1/familyName | "Hassel" |

As we see from table 2.1, the query lists all triples we know in the model.

The ASK form enables us to verify whether or not certain query pattern are true or not. We could use it to ask if we know from the model in figure 2.2 whether or not there are an entity which has a given name Ärne". Listing 2.17 shows how this is done.

@prefix foaf: <http://xmlns.com/foaf/0.1/>

ASK { ?x foaf:givenName "Arne" }

In our case the result would be false.

The CONSTRUCT form enables us to derive a graph derived from other graphs. Lets look at another example in listing 2.18.

@prefix foaf: <http://xmlns.com/foaf/0.1/>

CONSTRUCT { ?x foaf:givenName "Arne" }

WHERE { ?x foaf:familyName "Hassel" }

Now, if we were to run the ASK query in listing 2.17 against the new graph, we would get the result true. And if we ran the SELECT query in listing , we would get the result in table 2.2.

| ?subject | ?predicate | ?object |

|---|---|---|

| http://example.org/Arne | http://xmlns.com/foaf/0.1/givenName | "Arne" |

The DESCRIBE form results in a single RDF graph. It differs from the CONSTRUCT form in that we do not specify which triples we want the new graph to consist of, but rather that the SPARQL query processor determines which triples that are relevant. The relevant triples depend on the data available in the graph(s) queried, but takes basis in the resource(s) identified in the query pattern.

Lets look at the query in listing 2.19, which we apply to the models in figures 2.2 and 2.3, which we have assigned to IRIs http://example.org/GraphA and http://example.org/GraphB respectively. The result could be something like the serialization shown in listing 2.20.

@prefix foaf: <http://xmlns.com/foaf/0.1/>

@prefix ex: <http://example.org/>

CONSTRUCT ?y

FROM <http://example.org/GraphA>

FROM NAMED <http://example.org/GraphB>

WHERE { ?x foaf:knows ?y }

@prefix foaf: <http://xmlns.com/foaf/0.1/> . [ foaf:nick "Bjarne" , "Buddy" . ]

The resulting graph has two triples, namely the one concerning the entity which we known has the nicks "Bjarne" and "Buddy". As there are no triples where http://example.org/Kjetil acts as the subject, we can not describe anything.

I have introduced the token FROM in the query. This syntax allows us to specify which RDF Datasets we wish to query. This syntax is optional, as the query processor will use the default graph if nothing is specified. There can be one default graph, whose IRI we override if we specify FROM without NAMED. A query can take any number (or none) of named graphs, but do not need a default graph if we have one or more named graphs.

SPARQL has a great number of features, and I can not describe them all here16. But suffice to say, SPARQL is a powerful language that enables us to ask a variety of questions regarding our data.

2.1.6.2 SPARQL Update Language

The SPARQL 1.1 specification is part of a set of documents, which comprises ten documents. One of these is the document regarding SPARQL Update Language. It introduces an extension of the SPARQL syntax that allow us to update RDF datasets. The tokens are divided into two groups, Graph Update and Graph Management. The former consists of INSERT DATA, DELETE DATA, DELETE/INSERT (with the shortcut form DELETE WHERE), LOAD, and CLEAR. The latter consists of CREATE, DROP, COPY, MOVE, and ADD.

I will not go into detail, but SPARQL Update Language delivers a great variety of terms that allows us to manipulate our graphs with SPARQL.

2.1.7 Entailment

An important feature of RDF is the ability to infer knowledge from the existing knowledge, i.e. form or entail new conclusions. This is referred to as entailment. There are multiple forms of entailments in RDF, and it supports one form "out-of-the-box". The document "RDF Semantics"17 gives details about entailment for RDF, RDFS, and D-entailment.

Other regimes are the OWL Direct Semantics18, which covers OWL DL, OWL EL, and OWL QL. There is also RIF, which outlines a core syntax for exchanging rules. The idea is to support multiple rule language, instead of the specific entailment regimes.

As entailment did not become a part of the framework implemented as part of this thesis, I will not go into greater detail at this point. I will return to entailment in section 8.1.3.1, as part of the discussion.

2.2 JS

JS begins its life in 1995, then named Mocha, created by Brendan Eich at Netscape [17,27]. It then got rebranded as LiveScript, and later on JS when Netscape and Sun got together. When the standard was written, it was named ECMAScript, but everyone knows it as JS. It quickly gained traction for its easy inclusion into web pages, but was long ridiculed by developers [15].

Douglas Crockford states in his article "JavaScript: The World's Most Misunderstood Programming Language"19 ten reasons for the confusion centering JS:

- The Name,

- Lisp in C's clothing,

- Typecasting,

- Moving Target,

- Design Errors,

- Lousy Implementations,

- Bad Books,

- Substandard Standard,

- Amateurs, and

- Object-Oriented.

Luckily there has been some changes to the list since its conception in 2001.

Point 1-5 is quite valid yet20, but can be remedied by good and educational resources for learning JS21.

Point 6 is (mostly22) not valid anymore. If the community learned anything from the browser wars, it was to work with the community through the process of standards. Ecma Internationals effort to create a specification based on the de facto standard amongst the browsers has been successful, and groups such as W3Cs HTMLWG and WHATWG drives the production of standards, and great efforts are made to increase efficiency amongst JS-engines. Another testimony to the fact that implementations are increasingly popular are the efforts to use JS as a programming language outside the browser (described in section 2.2.7).

Point 7 depends on your view of good books, and although there is much left to desire, there are some good books out there23. But more importantly, there are several efforts to deliver resources of high quality to educate developers in JS. These resources are increasingly - perhaps fittingly - web-based. There is also an increase of interest on conferences that target developers24.

Point 8 is left to be discussed (I have not read and analyzed the 440 pages that ECMAScript version 3 and 5 consists off), but the implementation of the standards seem to suggest that this point is not so valid anymore.

JS is increasingly becoming part of the professional world, adaptations into conferences being one of the arguments suggesting this trend. You also have examples of major companies either supporting or developing JS-libraries25. This would suggest that point 9 is not the case anymore26.

Point 10 is still valid, as it can be difficult for developers trained in conventional object-oriented languages like Java and C#. Again, as with point 1-5, this is remedied by proper, educational resources, that developers can turn to when puzzled by the intricacies of JS.

JS may be a greatly misunderstood language even today, but it seems to have a lot going for it. The fact that it is the de facto programming language for the web puts it into a position worthy of respect, and should be regarded as a resource which can be used for many great things.

2.2.1 Object-Oriented

JS is fundamentally OO as objects are its fundamental datatype [19,p. 115]. It treats objects different than many other programming languages though, as it does not have classes and class-oriented inheritance. There are fundamentally two ways of building up object systems, namely by prototypical inheritance (explained in section 2.2.1.1) and by aggregation (explained in section 2.2.1.2) [15].

Another design feature is its support of the functional programming style, by treating functions as first-class objects. This feature is explained thoroughly in section 2.2.1.3.

The level of object-orientation in JS is shown in that even literals (i.e. all primitive values except undefined and null) can be treated as objects. They are, however, immutable, and does not share the dynamic properties that "normal" objects in JS do. JS handles this by wrapping the values into their respectively object-type (e.g. String, Number, and Boolean). An example showing this is shown in listing 2.21.

var stringObject = new String('foo');

console.log(stringObject.length); // logs 3

var stringLiteral = 'foo';

console.log(stringLiteral.length); // logs 3

Other objects that are somewhat different from the norm is the Array- and Math-object, the former representing a list of values and the latter sporting a set of static methods.

Objects in JS do not need classes to be instantiated. But it is possible to emulate classes in JS though, as it helps us use class-depended features (e.g. some SDPs), and an example is shown in listing 2.22.

var MyClass = function () {

this.myProperty = 42;

this.myMethod = function (value) {

return value + this.myProperty;

};

};

var myObject = new MyClass();

console.log(myObject.myMethod(1295)); // logs 1337

2.2.1.1 Prototypical Inheritance

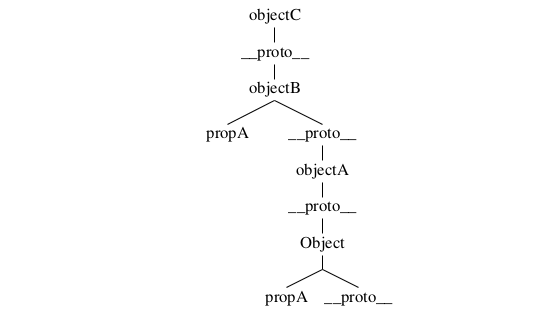

At the heart of all object-handling in JS is Object. All objects inherit this object if nothing else is specified, and it is there we find the default properties and methods that are shared by all objects. We can manipulate which object we want our objects to inherit, and as such can create a hierarchy of objects. Listing 2.23 show some examples of inheritance. In it we see how we can initiate objects, and how we can assign them to inherit other objects.

var objectA = {},

objectB = new Object(),

objectC = Object.create(objectB)

objectB.__proto__ = objectA;

Object.propA = 42;

objectB.propA = 1337;

console.log(objectA.propA, objectC.propA); // logs 42, 1337

The simple secret behind prototypical inheritance is that all objects have the property __proto__. When a property or method is called, JS will search for the called element by traversing the objects' properties, and if not found, it will continue with the prototype. We can visualize the structure in listing 2.23 as a tree, and have done so in figure 2.24.

So when we call objectA.propA, JS will check if objectA has the property propA. As it has not it will continue to its prototype, which is Object. Now, as Object has the property propA, JS will return its value, which is 42 in our case. But if we call objectC.prop, it will not have to go longer than objectB to see that there is a property that matches its search.

A last note is that Object also have the property __proto__. This can also be manipulated, but JS will take care so that we do not run into an infinite loop when looking for properties that does not exist (it is also considered a bad practice (i.e. an anti-pattern) to manipulate the prototype of Object).

2.2.1.2 Dynamic Properties

All mutable objects in JS can be manipulated at run-time. This we also see in listing 2.23, as we add the property propA in line 5 and 6. Objects are basically containers for key-value entities, where the key is a string. In this regard, objects in JS can be regarded as maps, or dictionaries.

We can at any time manipulate existing properties by replacing its values or delete the key altogether. We can also manipulate objects that are prototyped, and the objects that inherit will also be affected. The internal works of this is that JS creates a reference in memory for variables that are set as objects. If those variables where to be set to other variables, the reference would be copied, not the values contained within.

A note on mutability and immutability: JS differentiate between primitive values and object. The former are immutable, while the latter is mutable. ECMA5 offers three new functions that alter this behavior, namely the properties seal, freeze, and preventExtensions in Object (with the responding isSealed, isFrozen, and isExtensible to test whether or not these are set) [17,p. 114-115]. Explaining how these functions are outside the scope of this thesis, but suffice to say is that ECMA5 adds some spice to the mutable properties of JS-objects.

2.2.1.3 Functional Features

All functions are treated as first-class objects, and as such can be manipulated as any other object. It can also be passed around as variables, and this opens for some nifty features. By passing a function as a parameter, we can call that function whenever we want, e.g. after we have loaded a set of resource. This asynchronous feature is explained in depth in section 2.2.5.

Functions can be instantiated in many ways, as shown in listing 2.25. A function consists of three elements [19,p. 164]:

- Name: An identifier that names the function (optional in function definition expressions).

- Parameter(s): A pair of parentheses around a comma-separated list of zero or more identifiers.

- Body: A pair of curly braces with zero or more JS-statements inside.

function functionA (x) { return x; };

var functionB = function (x) { return x; },

functionC = function functionD (x) { return x; },

functionE = new Function("x", "return x;");

console.log(functionA(42), // logs 42

functionB(42), // logs 42

functionC(42), // logs 42

functionD(42), // throws ReferenceError: functionD is not defined

functionE(42)); // logs 42

All types in listing 2.25 support these requirements, albeit a little differently. Line 1 shows a named function, while the other two are anonymous. Anonymous functions are called through their reference, i.e. the variables they are set to. Named functions is referable by their names, if not they are set to a variable, in which case it will be referable by the variable (line 9 shows what happens if you call the function by its name when its set to a variable).

Functions of the types listed in line 1-3 can be used as constructors for new objects, while the one in line 4 can be used as a prototype. A simple example of this is shown in listing 2.26. It introduces the use of this, which will be explained in section 2.2.2.

function ObjectA (x) {

this.x = x;

this.methodA = function (y) {

return this.x + y;

};

}

var A = new ObjectA(1300);

console.log(A.methodA(37)); // logs 1337

2.2.2 Scope

The way JS handles the scope may be confusing to developers coming from class-oriented programming languages. JS does not contain syntax such as private of protected for use with variables, but it supports private variables for objects. It does so in the way it handles the context functions are part of (e.g. the scope).

Functions in JS can be nested within other functions, and they have access to any variables that are in scope where they are defined. This means that JS-functions are closures, and it enables important and powerful programming techniques [19].

If a variable is not set as a property in an object, it will be a part of the global object. The global object in JS depends on which environment it is run in, but in most browsers it is represented by the object window. This has some consequences, like the fact that usage of the syntax-element var is optional; it will become a key-value entity in the scope in which it is declared, which is the global object if nothing else is specified. This is exemplified in listing 2.27.

var x = 42; y = 42; window.z = 42; console.log(x, y, z); // logs 42 42 42

2.2.2.1 Closure

Lets review a simple example of closure, given in listing 2.28. In this example we have two functions, one which works as a constructor, and another that merely calls a function it has been given as parameter. When we pass a.getValue to functionA, JS also include the context which that method runs in, in effect creating a closure.

var ObjectA = function (val) {

this.val = val;

this.getValue = function () {

return this.val;

}

},

functionA = function (getFunc) {

return getFunc();

};

var a = new ObjectA(42);

console.log(functionA(a.getValue)); // logs 42

This feature is increasingly used in JS-libraries, and is getting a lot of appraise from the community. But it is also a headache for many aspiring JS-developers, as it may be a bit difficult to wrap your head around (and use correctly). Lets look another example of what may go wrong, given in listing 2.29. In this example we try to access this.val inside functionAA. But as functionAA is not part of the scope of functionA, and thereby not being a part of the closure given to functionB, we fall back to calling on the global object. Since the global object does not have a property named val, it will return undefined.

var functionA = function (val, func) {

this.val = val;

function functionAA () {

return this.val;

}

return func(functionAA);

},

function B = function (func) {

return func();

};

console.log(functionA(42, functionB)); //logs undefined

2.2.3 Static functions

JS supports static functions in that all functions are treated as objects, and by extension can be extended with methods. Listing 2.30 illustrates an example of this.

var funcA = function (val) { this.val = val; },

objA = new funcA(1337);

funcA.funcB = function () { return 42; }

funcA.funcC = function () { return this.val; }

console.log(funcA.funcB()); // logs 42

console.log(objA.funcC()); // throws TypeError

console.log(funcA.funcC.call(objA); // logs 1337

Note that static functions are not accessible as methods in objects constructed with the parenting functions as constructor. But we can manipulate the scope of the functions by overloading this (with call) to be the object we wish to refer to, as shown on line 7.

2.2.4 JSON

JSON is a lightweight, text-based data interchange format. It is originally based on JS, but is language-independent[16]. It was specified by Douglas Crockford in RFC 4627, and enjoys support in most major programming languages.

JSON consists of literals that are either false, null, true, an object (i.e. collections of key-value pairs), an array (i.e. lists), a number, or a string [16]. Listing 2.31 shows some examples of valid JSON-objects, as well as some structures that are not valid JSON.

// valid, can all be parsed by JSON.parse

var goodA = '42',

goodB = '{ "a": 42 }',

goodC = '[ 1337, { "a": 42 }]';

// invalid, will all make JSON.parse throw a SyntaxError

var badA = '', // unexpected end of input

badB = 'function (x) { return x; }', // unexpected token u

badC = '{ "a": new Object() }'; // unexpected token e

JS supports JSON by default (given in ECMAScript Language Definition [17]).

2.2.5 Asynchronous Loading of Resources

Asynchronous loading or resources are common in browsers. Normal HTML documents normally externalize much of its CSS and JS functionality, as dictated by good practices. Those resources are loaded by the browser by default, without too much hassle. But when it comes to making use of the browsers API (i.e. the ones available to JS) to load resources asynchronously, it becomes another game entirely.

2.2.5.1 SOP

As with many issues, handling external resources are difficult in JS because of security issues. And justifiable so, as JS becomes an increasingly powerful programming language, so are the possibilities to abuse it. Users of WWW are increasingly used to insert personal information, and if we cannot trust owners of web pages to control what is being run on their site, then there would be a lot of issues with trust on the web27.

Perhaps the most important security concept within modern browsers is the idea of SOP28. Although there is no single SOP governing how browsers implement it, the idea is that resources that do not share the same origin (i.e. having the same scheme, host, and port in the IRI (concepts explained in section 2.1.4.2)) are isolated from each other.

It is possible to circumvent SOP in JS by inserting a script-tag referring to an external file. This technique is used by JSONP, which allows JSON residing in external files to be loaded during run-time.

2.2.5.2 CSP

Another way of handling security concerning external resources is CSP. CSP is in the works (Working Draft at W3C29), and as an incomplete standard it may be prone to changes. But the basic idea is to let developers whitelist external resources. The policy is first and foremost being designed to be part of the HTTP response header, but there is also work on letting it be a part of HEAD in a HTML document, as a META tag.

2.2.5.3 XHR

XHR has been part of the world of browsers for a while. It was conceived by Microsoft in their work on Microsoft Exchange Server 2000, and was later ported by Mozilla. It was overlooked for quite a while, until AJAX became a trend, as developers understood the power it had to load resources asynchronously (and synchronously, if needed).

XHR2 is a Working Draft as of this writing, but introduces several features requested by the community, allowing cross-domain fetching of resources being one of them. To allow this, it makes use of another standard which is in the making, namely CORS30. This technology is already available in some browsers. But its inherit problem is that it requires domain-owners to add information to their HTTP headers.

Another technology developed to fetch resources across domains are XDR. But as it was not included in the framework, I have let it be a part of the discussion in section 8.1.4.1.

2.2.6 CJS

CommonJS is a volunteer-driven project31 aiming to standardize and implement specifications that expand the functionality of JS. Specifications include handling of modules, unit testing, packaging, I/O, handling of binary data, and much more. We have included details concerning three of these specifications (the promise pattern, section 2.2.6.1 and the module patterns AMD and CommonJS Modules, sections 2.2.8.3 and 2.2.8.4), as they have been included in the framework.

2.2.6.1 Promise Pattern

The promise pattern is titled Promises/A by CommonJS32. It is also referred to as Deferred, and works by having an object represent a promise. The promise consists of a result that will be returned at some time in the future, and in the meantime, the run-time will continue evaluating the rest of the sourcecode. This can be set up so that when the result is ready, a function is called with the result sent as parameter. This allows for some proper handling of asynchronous functionality.

Listing 2.32 shows some examples of the API. A central point of these examples are that the functions passed as parameters to the then-function are called as soon as the promise are resolved, i.e. detached from the order in which they were called in the code.

// When is available as a global variable

var promiseA = When.defer(),

promiseB = When.defer();

setTimeout(function () { promiseA.resolve(42); }, 2000));

setTimeout(function () { promiseB.resolve(1337); }, 1000));

// Preparing single promises

promiseA.then(function (result) {

console.log(result); // logs 42 after 2000 milliseconds

});

promiseB.then(function (result) {

console.log(result); // logs 1337 after 1000 milliseconds

});

// Preparing multiples promises

When.all([ promiseA, promiseB ], function (results) {

console.log(results); // logs [ 42, 1337 ] after 2000 milliseconds

});

2.2.7 Server-side implementations

As JS has become an increasingly popular programming language, so has its use outside of the browser. One of these branches is the use of JS for server-side web-applications. As part of this thesis I have only used one such implementation as a run-time environment for my TDD. A more in-length discussion of the matter can be found in section 8.1.5.

2.2.8 Module Patterns

JS is a flexible language, and one area in which this is very clear is when it comes to module handling. This is not a surprise, as handling variables and ensuring they are not compromised by code elsewhere in the application is harder than you might think. As such, "modules are an integral piece of any robust application's architecture and typically help in keeping the units of code for a project both cleanly separated and organized" [29].

This section will describe some of the patterns of module handling I have found during my research.

2.2.8.1 Contained Module

The Contained Module pattern is designed to encapsulate private variables and return an explicit object with public methods that can work with the private variables. It was made popular by Douglas Crockford, and is used extensively in smaller libraries. Listing 2.33 shows an example using this pattern.

var myModule = (function () {

var myPrivateVariable = 42;

function myFunction () {

return myPrivateVariable;

}

return {

myPublicFunction: myFunction

};

})();

console.log(myModule.myPublicFunction); // logs 42

The problem with this pattern is that it does not really address how to combine several modules. For that we turn to the other patterns.

2.2.8.2 Namespaces

The simplest way structure several modules is to follow the Namespaces pattern. An example of it can be seen in listing 2.34.

var OurNamespace = {};

// in another file, called after the above code has been evaluated

(function (ns) {

ns.anotherLevel = {};

})(OurNamespace);

// another file yet again, called after the above code

(function (ns) {

ns.anotherLevel.ourFunctionalModule = function () { /* ... */ };

})(OurNamespace);

It requires the developer to include the modules in correct order, which can be troublesome. The one single argument to use this is that it is supported out-of-the box, as it does not depend on any extra functionality than the one inherent in browsers.

2.2.8.3 AMD

The AMD pattern is titled Modules/Async/A by CommonJS33. Its overall goal is to provide a solution for modular JS that developers can use today[29]. Essentially it makes use of the functions define and require. The former defines a module, while the latter enables us to load dependencies that the module requires. Listing 2.35 shows an example.

define([

"dependentModuleA",

"dependentModuleB"

], function (depModA, depModB) {

function privateFunction () {

/* This function is not publicly available by other modules */

}

return { /* This object becomes available to other modules */

myPublicFunction: function () { /* ... */ }

};

});

AMD allows us to split our functionality into modules and easily load components as they are needed, in run-time. This in turn leads do a more decoupled code base, making it easier to make modules reusable. But it may also increase the loading time required, as each module requested fires a HTTP request. Which consequences this has for the framework is further discussed in section 8.1.2.

2.2.8.4 CommonJS Module

Another pattern to emerge from the CommonJS community the CommonJS Module pattern. It makes use of the functions require and exports. An example is given in listing 2.36.

var moduleDependency = require("moduleWeAreDependentOn");

function privateFunction () {

/* This function is not publicly available by other modules */

}

exports.myModule = { // this object is available to other modules

myPublicFunction: function () { /* ... */ }

};

CommonJS also allow modules to be loaded asynchronously34, and in many regards resembles AMD a lot. AMD and CommonJS Module differ in which environment they cater to. AMD is mostly being used by client-side projects, while CommonJS Modules is used by server-side projects. That said, both types can be used on either sides, and it becomes merely a question of taste.

2.2.8.5 Harmony

Last, we have the modular pattern that is to be part of the sixth edition of EcmaScript, a.k.a. ES.next, a.k.a. Harmony. This pattern makes use of new syntax, and an example can be seen in listing 2.37.

module moduleA {

export var functionA = function () { /* ... */ }

export var objectA = { /* ... */ }

export var propertyA = 42;

}

module moduleB {

import functionA, objectA, propertyA from moduleA;

// equivalent to the above: import * from moduleA;

}

This syntax is not available in standard browsers yet, as it is still subject to change, however it is available for experimentation through tools such as traceur-compiler35 and esprima36.

2.3 SDP

Patterns were originally conceptualized as an architectural concept by Christopher Alexander, who wrote:

Each pattern describes a problem which occurs over and over again in our environment, and then describes the core of the solution to that problem, in such a way that you can use this solution a million times over, without ever doing it the same way twice [1,p. x].

Alexander's work inspired amongst others Kent Beck and Ward Cunningham, who in 1987 presented the report "Using Pattern Languages for Object-Oriented Programs"37 on OOPSLA-87. They outlined the adaptation from Pattern Language to object-oriented programming, and summarized a system of five patterns that they had successfully used for designing window-based user interfaces.

SDPs did not become popular before the publication of Design Patterns by Erich Gamma, Richard Helm, Ralph Johnson, and John Vlissides (often known as GoF) in 1994. They generalized patterns to have four essential elements (all quoted in shortened from Design Patterns [22,p. 3]):

- Pattern name: A handle which we can use to describe a design problem, its solutions, and consequences in a word or two. A pattern name is useful as a higher level of abstraction, increases our pattern vocabulary, and eases communication in social contexts.

- Problem: Each pattern is designed to handle a specific problem, and this part tells us when it is appropriate to use a specific SDP.

- Solution: This part explains in detail how to solve the given problem by explaining the elements that make up the design, their relationships, responsibilities, and collaborations.

- Consequences: All implementations have consequences, and this part tells us what results and trade-offs we may expect from applying the pattern. Consequences may be how the pattern affects a system's flexibility, extensibility, or portability.

In their book they also design a classification scheme that aims to enable developers to refer to families of SDPs. One categorization is by purpose, which can be either creational, structural, or behavioral. The second categorization is by scope, which can be classes or objects. SDPs that are related to this thesis have been classified in table 2.3.

| Purpose | ||||

|---|---|---|---|---|

| Creational | Structural | Behavioral | ||

| Scope | Class | Adapter | Interpreter | |

| Object | Builder | Adapter | Observer | |

| Prototype | Bridge | Strategy | ||

| Composite | ||||

| Decorator | ||||

| Facade | ||||

| Proxy | ||||

At this point I need to make two points clear. The first is that as JS is a class-less programming language, the categorization class might be a bit off. But remember that we can emulate classes in JS, and this allows us to make use of the class-categorized patterns. The other point is that JS does not support interfaces. Interfaces can be emulated (at the cost of complexity), but is not anything more than a construct that checks whether or not a list of properties is set at run-time. It is on the base of this that I have excluded the use of interfaces in this thesis, falling back to merely describing the abstractions of participants, and how they are represented in the code samples38.

GoF continues to describe a consistent format for describing SDP, which including Pattern Name and and Classification, Intent, Also Known As, Motivation, Applicability, Structure, Participants, Collaborations, Consequences, Implementation, Sample Code, Known Uses, and Related Patterns. Using all of these labels takes a lot of pages, and in this thesis I have limited myself to a description of the pattern along with a figure and an example in JS.

2.3.1 Adapter

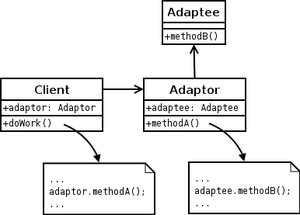

The Adapter pattern "convert the interface of a class into another interface clients expect" [22]. This pattern is useful when one wishes to make use of third party libraries without modifying them. In its classic form, the Adapter pattern are both a class and an object pattern, where the former makes of subclassing, while the latter forms a reference to the components it adapts, thereby routing requests.

In listing 2.39 I have shown examples of both. Line 4 shows subclassing through Object.create, which enables derivatives of AdapterClass to make use of the methods in the Original object. Line 5 shows the constructor function that returns an object that refers to the Original object, and thereby allows routing of calls.

I have not made use of the Adapter pattern in Graphite, a point I return to in section 8.2.1.1 in the discussion.

var Original = {

originalMethod: function (options) { /* ... */ }

},

AdapterClass = Object.create(Original).

AdapterObject = function () {

return Object.create({

adapterMethod: function (paramA, paramB) {

return this.target.originalMethod({ a: paramA, b: paramB });

}

}, {

target: { value: Object.create(Original); }

});

};

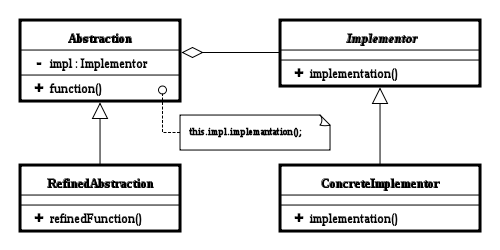

2.3.2 Bridge

The Bridge pattern "decouple an abstraction from its implementation so that the two can vary independently" [22]. The pattern is actually often used in JS in terms of event handling, using code such as the one in listing 2.41. In that case, the abstraction is that a function is to be called when a specific button is called, the refined abstraction is the actual functions. The implementor on the other hand is a function that takes the id to the button to be handled, and the abstraction that is to be coupled. The concrete implementor is the function handleClick, which configures the setup needed.

We could have implemented another abstraction, namely making sure that whatever was passed as handleClick's first parameter was an object that supported the onclick property. This way, I could have removed the limitation of sending just strings of ids, e.g. passing the object returned from document.getElementByClass("buttons").

var cancelFunction = function () {

console.log("Cancel was clicked");

},

submitFunction = function () {

console.log("Submit was clicked");

},

handleClick = function (buttonId, func) {

document.getElementById(buttonId).onclick = function () {

func();

return false;

};

};

handleClick("CancelButton", cancelFunction); // When clicked, will log "Cancel was clicked"

handleClick("SubmitButton", submitFunction); // When clicked, will log "Submit was clicked"

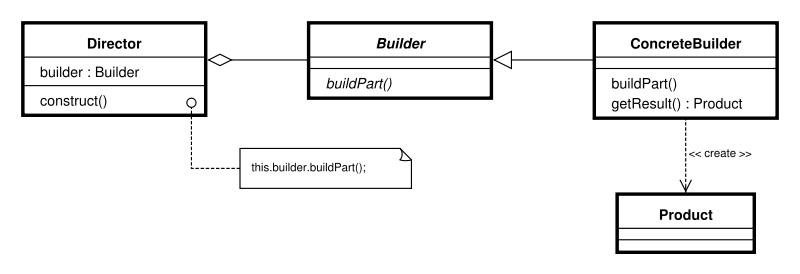

2.3.3 Builder

The Builder pattern "separate the construction of a complex object from its representation so that the same construction process can create different representations" [22]. A good example of this is the way jQuery allows us to construct DOM elements (listing 2.42).

var paragraph = $("<p>"),

titleWithText = $("<h1>Our title</h1>"),

inputWithAttr = $('<input type="password" />');

These lines should be very easy to read for developers familiar with HTML, and handles a lot of logic that is run behind the scene (e.g. the use document.createElement, adding attributes, and text).

Now, lets look at listing 2.44 for my own version of a DOM-builder (a very limited version, i.e. it only support one level of element). I have removed parts of the code, as they unnecessary to understand how the pattern works. The participants are DOMCreator (the Director), DOMBuilder (ConcreteBuilder), and DOMElement (the Product). The code works in following steps:

- We pass to DOMCreator the string we want parsed.

- DOMCreator creates an instance of DOMBuilder, and passes along the tag.

- DOMBuilder creates an instance of DOMElement, and sets the tag.

- DOMCreator parses attributes, if any, and passes them to DOMBuilder.

- DOMBuilder adds attributes to the DOMElement.

- DOMCreator parses text, if any, and passes it to DOMBuilder.

- DOMBuilder adds text.

After these steps, the client can fetch the element by calling getElement on DOMCreator.

var DOMElement = {

attributes = {},

tag = null,

text = ""

},

DOMBuilder = function (tag) {

this.element = Object.create(DOMElement);

this.element.tag = tag;

this.addAttribute = function (key, value) {

this.element.attributes[key] = value;

}:

this.addText = function (text) { this.element.text = text; };

},

tokens = {}, // a map of tokens to parse

fetch = function (str, token) {}, // returns specified type of token

remove = function (str, token) {}, // removes token, returns modified string

test = function (str, token) {}, // tests for specific token, return boolean

DOMCreator = function (str) {

var key, tag, text, value;

// fetches the tag

this.builder = new DOMBuilder(tag);

while (test(str, tokens.whitespace)) {

// fetches key-value pair of attributes, if any

this.builder.addAttribute(key, value);

}

if (!test(str, tokens.slash)) {

// fetches text, if any

this.builder.addText(text);

}

// We have what we need

};

DOMCreator.prototype.getElement = function () {

return this.builder.element;

}

var element = new DOMCreator("<p>42</p>");

console.log(element.getElement()); // logs { attributes: {}, tag: "p", text: "42" }

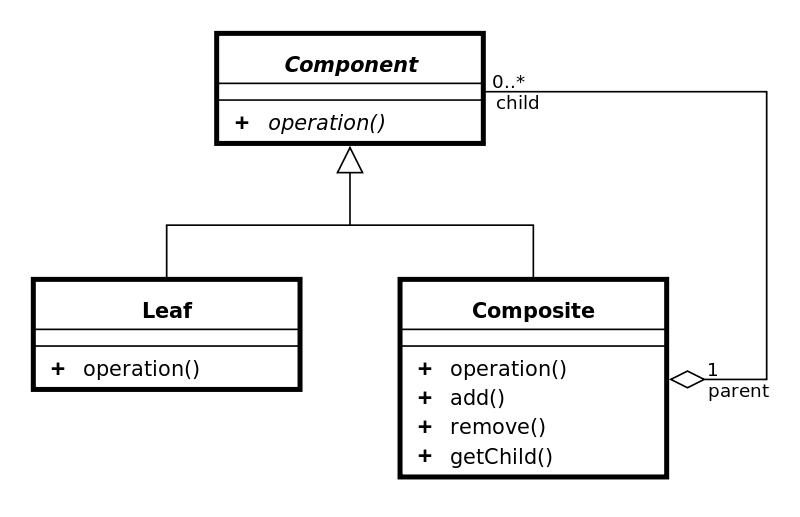

2.3.4 Composite

The Composite pattern "compose objects into tree structures to represent part-whole hierarchies."[22]. This is a method of abstracting the types of a complex structure, and streamlining certain procedures. In listing 2.46 I have continued with the DOM, and created a structure that represents DOM elements that can be used to generate HTML.

In this example we have two Composites (DOMComposite, DOMElement) and one Leaf (DOMText). The client gets the HTML by calling the method getHtml on any of the elements desired, and they will take care of producing the result from all nested, if any, elements.

var DOMComposite = function (children) {

this.children = children;

},

DOMElement = function (tag, content) {

this.tag = tag;

this.content = content;

},

DOMText = function (text) {

this.text = text;

};

DOMComposite.prototype = {

addChild: function (element) {

this.children.push(element);

},

getHtml: function () {

var child, html = "";

for (child in this.children) {

html += child.getHTML();

}

return html;

}

}

DOMElement.prototype.getHtml = function () {

var text = "<" + this.tag;

if (this.content) {

return text + ">" + this.content.getHtml() + "</" + this.tag + ">";

}

return text + " />";

};

DOMText.prototype.getHtml = function () {

return this.text;

}

var text1 = new DOMText("42"),

text2 = new DOMText("1337"),

composite1 = new DOMComposite([ text1 ]),

element1 = new DOMElement("span", text2),

composite2 = new DOMComposite([ composite1, element1 ]);

console.log(composite2.getHtml()); // logs "42<span>1337</span>"

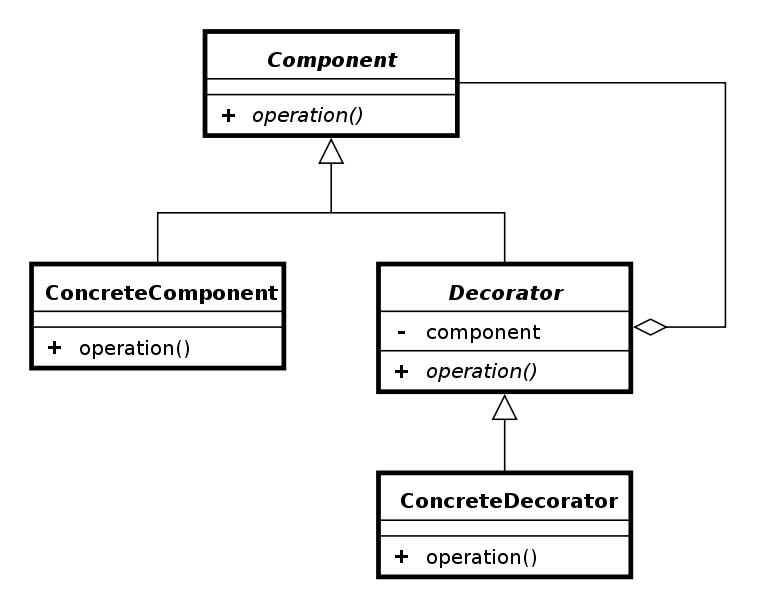

2.3.5 Decorator

The Decorator pattern "attach additional responsibilities to an object dynamically" [22]. As JS is dynamic in its nature, this is not a very difficult pattern to implement. In listing 2.48, I have simplified the example used by Addy Osmani in his book Learning JavaScript Design Patterns39.

To use the participants in figure 2.47, we have PC as ConcreteComponent, and addMemory, addScreen, and addKeyboard as the ConcreteDecorators.

var PC = { cost: function () { return 1000; } },

addMemory = function (PC) {

return PC.cost: function () { PC.cost() + 300; };

},

addScreen = function (PC) {

return PC.cost: function () { PC.cost() + 30; };

},

addKeyboard = function (PC) {

return PC.cost: function () { PC.cost() + 7; };

},

myPC = Object.create(PC);

addMemory(myPC);

addScreen(myPC);

addKeyboard(myPC);

console.log(myPC.cost()); // logs 1337

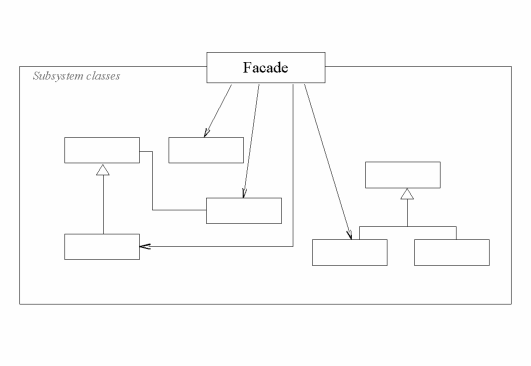

2.3.6 Facade

The Facade pattern "provide a unified interface to a set of interfaces in a subsystem" [22]. Again, jQuery shows us an example of design pattern, as the constructor of the jQuery-object applies the Facade pattern. It is usually used to simplify the API the user have to concern himself/herself with, by delivering a subset of methods from underlying modules.

In listing 2.50, I have designed an object that takes the libraries jQuery and when.js, and delivers a new interface that taps into some of their functionality. The facade in the example have one method, namely load, and promises to load the callback functions in the order they are used (i.e. http://example.org/1337 will not be loaded before http://example.org/42 has completed).

// assumes $ and When are global variables

var facade = (function (jQuery, When) {

var promise = null;

function load (uri, callback) {

promise = When.defer();

jQuery.get(uri, {}, function () {

promise.resolve(arguments);

callback.apply(this, arguments);

});

}

return {

load: function (uri, callback) {

if (promise) {

promise.then(function () {

load(uri, callback);

});

} else {

load(uri, callback);

}

}

};

}($, When));

facade.load("http://example.org/42", function () {

console.log(42);

});

facade.load("http://example.org/1337", function () {

console.log(1337);

});

// Console will always log 42 first, 1337 second

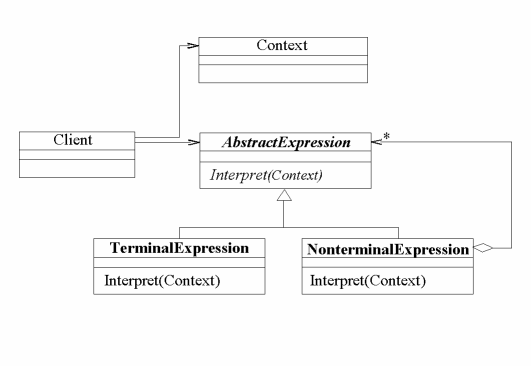

2.3.7 Interpreter

The Interpreter pattern takes a given language and "define a representation for its grammar along with an interpreter that uses the representation to interpret sentences in the language" [22].

In my simple example I want to be able to parse simple equations, using the following rules:

- Legal tokens are plus, minus and numbers, and these tokens are represented as expressions.

- It reads the equation from left to right.

- No whitespace allowed.

- The plus and minus expression take the its left expression as parameter, and expects a number to come after it (e.g. "1+44-3" and "-2+3" are both allowed, but "2++3" is not).

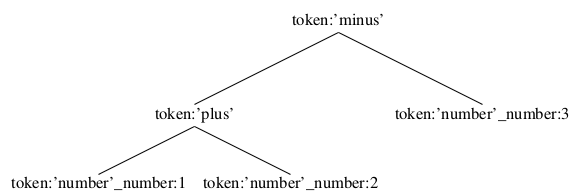

The result is an object with a tree-structure consisting of my grammar. E.g. the equation "1+2-3" would look like figure 2.51.

function parseEquation = function (equation) {

var grammar = {

minus: function (left, right) { return { token: 'minus', left: left, right: right }; },

number : function (number) { return { token: 'number', number: number }; }

minus: function (left, right) { return { token: 'plus', left: left, right: right }; },

},

tokens = {

minus = {

expression: /^-/,

evaluate: function (base) {

var right = tokens.number(base);

base.eq = base.eq.substring(1);

return grammar.minus(base.left, right);

}

},

number = {

expression: /[0-9]+/,

evaluate: function (base) {

var value = this.expression.exec(base.eq)[0];

base.eq = base.eq.substring(value.length);

return grammar.number(value);

}

},

plus = {

expression: /^+/,

evaluate: function (base) {

var right = tokens.number(base);

base.eq = base.eq.substring(1);

return grammar.plus(base.left, right);

}

}

};

this.left = grammar.number(0);

this.eq = equation;

while (this.eq !== "") {

if (tokens.minus.expression.test(this.eq)) this.left = tokens.minus.evaluate(this);

else if (tokens.number.expression(this.eq)) this.left = tokens.number.evaluate(this);

else if (tokens.plus.expression.test(this.eq)) this.left = tokens.plus.evalue(this);

else throw new Error("No valid expression");

}

return this.left;

}

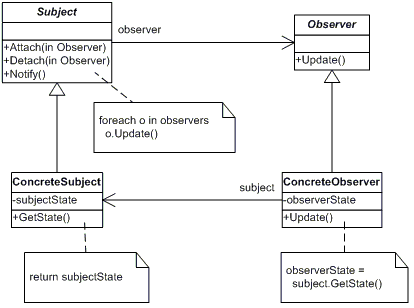

2.3.8 Observer

The Observer pattern "define a one-to-many dependency between objects so that when one object changes state, all its dependents are notified and updated automatically" [22]. This is useful when objects are dependent to know when a dependency changes state, as they will be notified when change happen.

I have included a simple example in listing 2.55. This can be evolved into context-aware notifies, so that observers only are notified when certain things happen. Note that I have made use of closure (section 2.2.2.1) in this example, as the object called upon in line 22 actually is the object first passed in the obsObject construct-function (line 19).

var obsSubject = function () {

this.observers = [];

this.value;

this.addObserver = function (observer) {

this.observers.push(observer);

};

this.notify = function () {

var observer;

for (observer in this.observers) {

observer.update();

}

};

this.getValue = function () { return this.value; };

this.setValue = function (val) {

this.value = val;

this.notify();

};

},

obsObject = function (subject) {

subject.addObserver(this);

this.update = function () {

console.log("New value: " + subject.getValue());

}

},

mySubject = new obsSubject(),

myObj1 = new obsObject(mySubject),

myObj2 = new obsObject(mySubject);

mySubject.setValue(42); // logs "New value: 42" two times

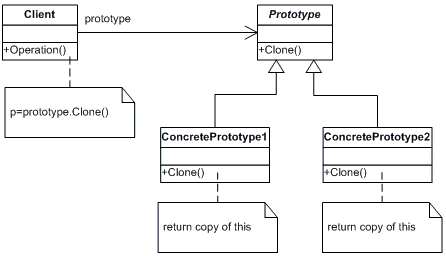

2.3.9 Prototype

The Prototype pattern "specify the kinds of objects to create using a prototypical instane, and create new objects by copying this prototype" [22]. The variation from how JS handles prototypical inheritance (section 2.2.1.1) is slight, as JS handles prototyping by copying the reference to the prototype object, while the pattern copies the whole thing.

The example given in listing 2.58 shows this in action. Line 18 shows what happens if you compare the prototype object of two objects that have been created using Object.create in JS, compared to what happens if you compare the cloned objects. The references are different.

The pattern is not specified in the description of the modules in Graphite, but is included here to show how it differs from prototypical inheritance. It has been used throughout the framework, although as the function named extend (that resides in the Utils module). Most often it is used in pair with the parameter option given to functions that offer slight variations from its default behavior. An example is given in listing 2.56.

// assumes a global function extend that functions like the Prototype pattern

var myConfigurableFunction = function (options) {

var defaultConfig = extend({

configurationA: true,

configurationB: 42

}, options);

/* Rest of the functions body */

};

myConfigurableFunction({ configurationB: 1337 }); // overwriting the default value 42

var Prototype = {

methodA: function () { /* ... */ },

objectA: { /* ... */ },

propA: { /* ... */ }

};

function clone (obj) {

var o = {}, key;

for (key in obj) {

if (typeof obj === "Object") o.__proto__[key] = clone(obj[key]);

else o.__proto__[key] = obj[key];

}

return o;

}

var a = Object.create(Prototype),

b = Object.create(Prototype),

c = clone(Prototype),

d = clone(Prototype);

console.log(a.__proto__ === b.__proto__); // logs true

console.log(c.__proto__ === d.__proto__); // logs false

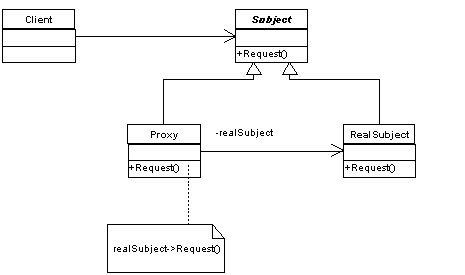

2.3.10 Proxy

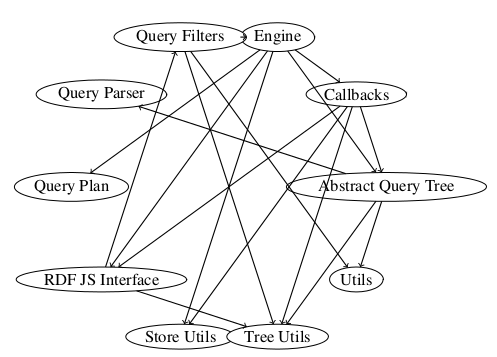

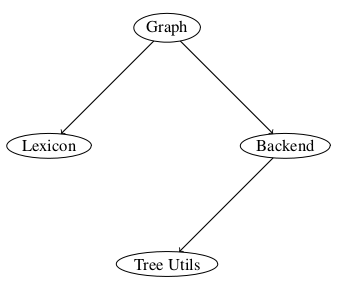

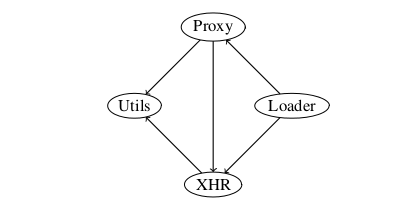

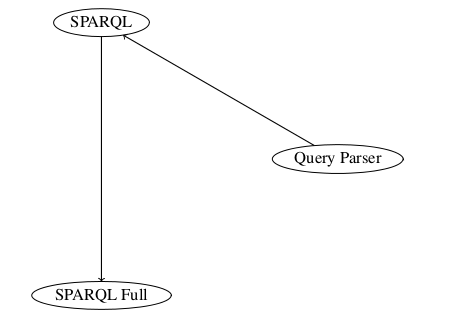

The Proxy pattern "provide a surrogate or placeholder for another object to control access to it" [22]. There are several kinds of proxies, like the virtual proxy (works like a lazy instantiator, i.e. only creating the proxied object when you need it), remote proxies (proxies an object on a remote destination), and controlling proxies (to handle access), and they may be combined.